AI Agents Learnings

Notes from two videos on building AI agents practically, without the hype.

Sources: Part 1 — How to Build Effective AI Agents (without the hype) · Part 2 — Building AI Agents in Pure Python

What Are AI Agents?

The popular definition: a pipeline of automation that at some point calls an LLM API. But not all AI systems are AI agents. Anthropic draws a clearer distinction between workflows (predefined control flow) and agents (LLM-driven dynamic decisions).

The Anthropic blog post on building effective agents is the key reference here.

Patterns

| Category | Pattern |
|---|---|
| Building Block | Augmented LLMs (retrieval, tools, memory) |
| Workflow | Prompt Chaining |
| Workflow | Routing |
| Workflow | Parallelization |
| Workflow | Orchestrator-Workers |
| Workflow | Evaluator-Optimizer |
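As a rough illustration of two of the workflow patterns in the table, the sketch below shows prompt chaining (each step consumes the previous step's output) and routing (one classification call selects a predefined handler). `fake_llm`, `chain`, `route`, and the handler names are hypothetical stand-ins invented for this note so that it runs offline; a real version would replace `fake_llm` with a provider API call.

```python
from typing import Callable

# Hypothetical stand-in for a real LLM call: returns canned text so the sketch runs offline.
def fake_llm(prompt: str) -> str:
    if prompt.startswith("Classify"):
        return "billing" if "invoice" in prompt.lower() else "general"
    return f"[model output for: {prompt[:40]}]"

def chain(steps: list[Callable[[str], str]], text: str) -> str:
    """Prompt chaining: feed each step's output into the next step."""
    for step in steps:
        text = step(text)
    return text

def route(message: str, handlers: dict[str, Callable[[str], str]]) -> str:
    """Routing: one classification call picks a predefined handler."""
    label = fake_llm(f"Classify this message as billing or general: {message}").strip()
    handler = handlers.get(label, handlers["general"])  # fall back on unknown labels
    return handler(message)

handlers = {
    "billing": lambda m: "BILLING: " + fake_llm(f"Answer the billing question: {m}"),
    "general": lambda m: "GENERAL: " + fake_llm(f"Answer the question: {m}"),
}

# Two chained steps: outline first, then draft from that outline.
outline_then_draft = chain(
    [lambda t: fake_llm(f"Outline: {t}"), lambda t: fake_llm(f"Draft from outline: {t}")],
    "agents vs workflows",
)
```

In both cases the control flow is fixed in code and the model only fills in text or a label, which is what makes these workflows rather than agents.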

Tips for Building Agents

- Be careful with agent frameworks. They get you running fast, but you won’t understand what’s happening underneath. Learn the primitives first — it makes you a better engineer.

- Prioritize deterministic workflows over complex agent patterns. Start simple. Understand the problem, look at all available data, categorize it, and solve it in a way that works 100% of the time before reaching for agents.

- Don’t jump straight from prototype to production; that is a classic path to hallucination chaos. Scale carefully.

- Build testing and evaluation systems from the beginning — not as an afterthought.

- Put guardrails on outputs. Before sending a response back to the user, have a second LLM check whether the answer is actually appropriate to send.
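The output guardrail from the last tip might look roughly like this. `check_response` is a hypothetical keyword-based stand-in for the second LLM call, and `Verdict`, `FALLBACK`, and `guarded_reply` are names made up for this sketch.

```python
from dataclasses import dataclass

@dataclass
class Verdict:
    allowed: bool
    reason: str

# Hypothetical checker standing in for a second LLM call that reviews the draft.
def check_response(draft: str) -> Verdict:
    banned_terms = ("password", "ssn")
    for term in banned_terms:
        if term in draft.lower():
            return Verdict(False, f"mentions {term}")
    return Verdict(True, "ok")

FALLBACK = "Sorry, I can't help with that request."

def guarded_reply(draft: str) -> str:
    """Send the draft only if the checker approves it; otherwise send a safe fallback."""
    verdict = check_response(draft)
    return draft if verdict.allowed else FALLBACK
```

The point of the shape is that the user never sees a draft the checker has not approved.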

Building Blocks in Code

From Building AI Agents in Pure Python

You don’t need any fancy frameworks to build AI agents — the LLM provider APIs are enough. The first ~23 minutes of that video cover practical code for the four core building blocks:

- Memory — persisting conversation context

- Structured output — constraining model responses to a schema

- Retrieval — fetching external knowledge at inference time

- Tools — letting the model call functions
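A minimal sketch of two of these building blocks, memory and structured output, using only the standard library. This is not the video's code: `Conversation`, `CalendarEvent`, `parse_event`, and `fake_llm` are hypothetical names, and `fake_llm` returns canned JSON in place of a real provider call.

```python
import json
from dataclasses import dataclass, field

@dataclass
class Conversation:
    """Memory: keep the running message list and resend it on every call."""
    messages: list[dict] = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        self.messages.append({"role": role, "content": content})

@dataclass
class CalendarEvent:
    """Structured output: the schema the model's reply must fit."""
    name: str
    date: str
    participants: list[str]

def parse_event(raw: str) -> CalendarEvent:
    """Parse the reply as JSON; a missing field raises KeyError instead of passing silently."""
    data = json.loads(raw)
    return CalendarEvent(
        name=str(data["name"]),
        date=str(data["date"]),
        participants=[str(p) for p in data["participants"]],
    )

# Hypothetical model: always replies with JSON in the expected shape.
def fake_llm(messages: list[dict]) -> str:
    return json.dumps({"name": "Science fair", "date": "Friday", "participants": ["Alice", "Bob"]})

convo = Conversation()
convo.add("user", "Alice and Bob are going to a science fair on Friday.")
reply = fake_llm(convo.messages)   # the whole history goes to the model
convo.add("assistant", reply)      # and the reply is stored for the next turn
event = parse_event(reply)
```

Provider SDKs usually offer their own schema enforcement; the manual parse here only shows the idea of rejecting replies that do not fit the schema.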

Workflow Patterns in Practice

Break down the problem the way a human would think about and approach it. A few notes:

- Parallelization is especially well-suited for guardrail checks — run safety evaluation in parallel with the main response rather than sequentially.
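One way to sketch the parallel guardrail idea with `asyncio.gather`. Both coroutines are hypothetical stand-ins for independent LLM calls, and the blocked-request message is an assumption of this note.

```python
import asyncio

# Hypothetical async stand-ins for two independent LLM calls.
async def generate_answer(question: str) -> str:
    await asyncio.sleep(0.01)  # simulated model latency
    return f"Answer to: {question}"

async def is_safe(question: str) -> bool:
    await asyncio.sleep(0.01)  # simulated model latency
    return "ignore previous instructions" not in question.lower()

async def answer_with_guardrail(question: str) -> str:
    # Both calls run concurrently, so the check adds little extra waiting time.
    answer, safe = await asyncio.gather(generate_answer(question), is_safe(question))
    return answer if safe else "Request blocked by safety check."

def run(question: str) -> str:
    return asyncio.run(answer_with_guardrail(question))
```

The trade-off is cost: the main answer is generated even when the check fails, in exchange for lower latency on the common, safe path.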

References

Andrew Ng: The Rise of AI Agents and Agentic Reasoning

Notes from Andrew Ng’s BUILD 2024 Keynote.

Source: Andrew Ng Explores The Rise Of AI Agents And Agentic Reasoning — BUILD 2024 Keynote

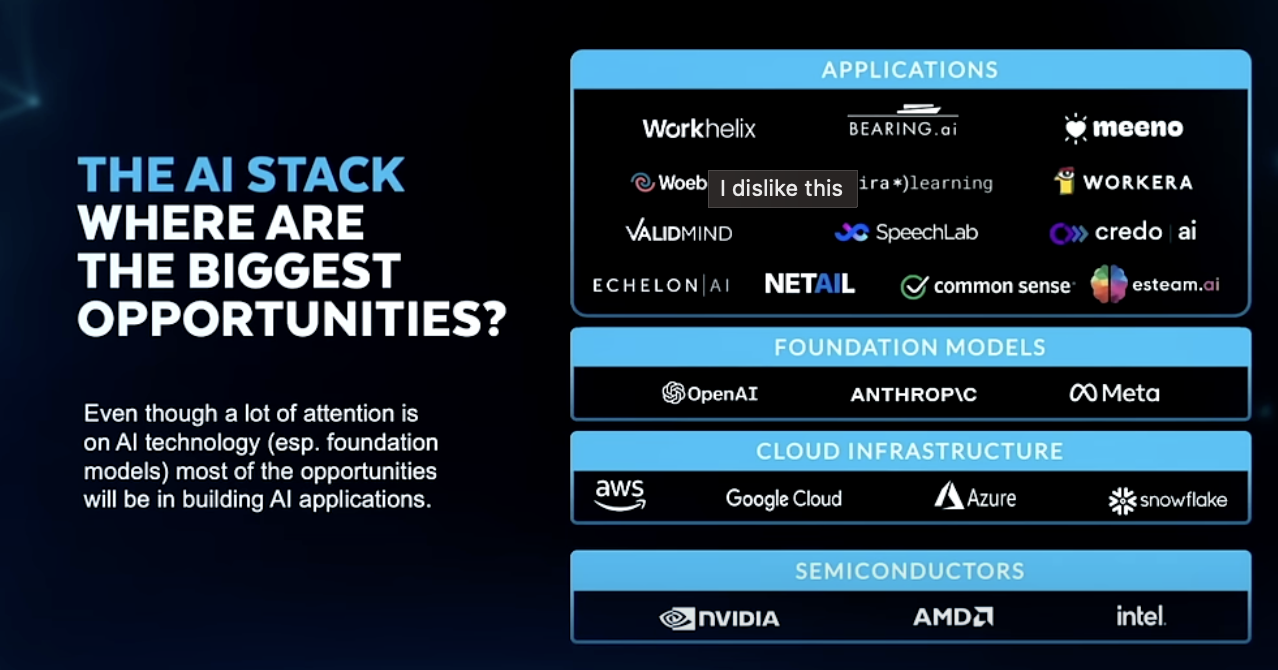

The AI Stack — Where Are the Biggest Opportunities?

Even though a lot of attention is on AI technology (foundation models), most of the opportunities will be in building AI applications.

- Generative AI is enabling fast ML product development

- Agentic AI workflows are the most important AI technology to pay attention to right now

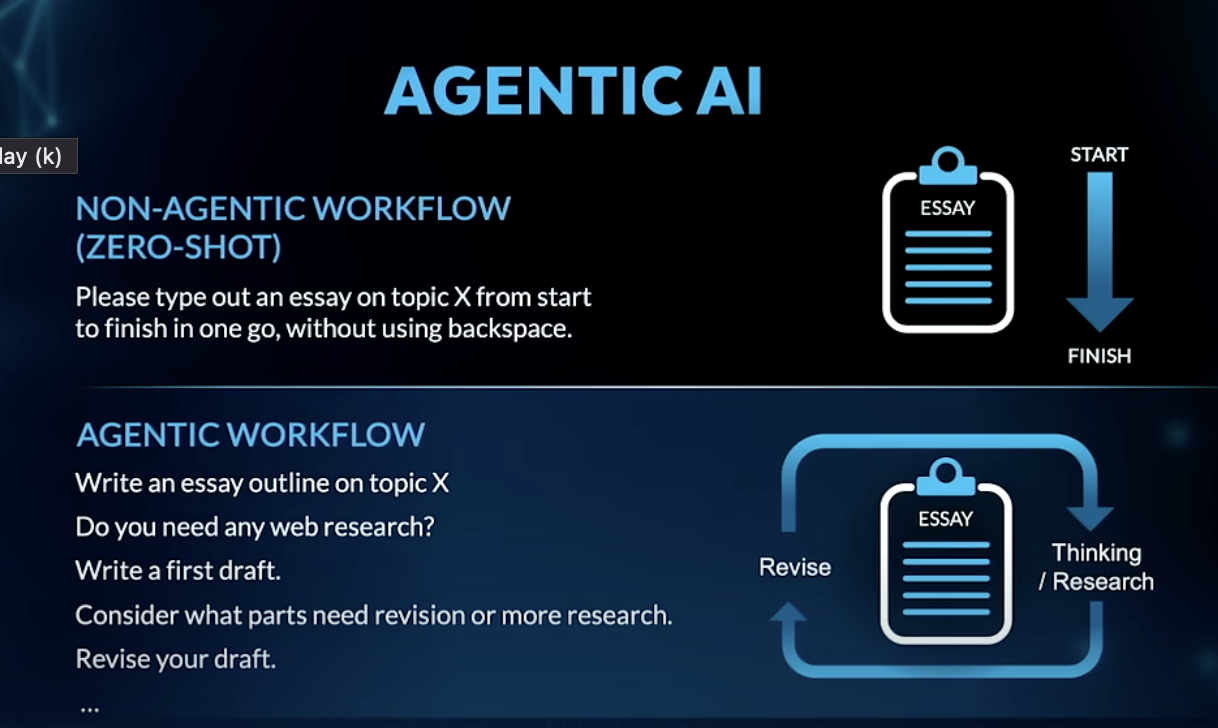

Agentic vs Non-Agentic Workflows

| | Non-Agentic (Zero-Shot) | Agentic |
|---|---|---|
| How it works | Single prompt, start to finish in one go | Iterative: outline → draft → research → revise |
| Analogy | Writing an essay without backspacing | Writing the way a human actually would |

The iterative loop (plan → act → reflect → revise) is what makes agentic workflows substantially more capable than single-shot prompting.
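The loop can be sketched as a draft, critique, revise cycle with a round limit. The three helper functions are hypothetical stand-ins for LLM calls, written so that the example runs deterministically.

```python
from typing import Optional

# Hypothetical stand-ins for three LLM calls.
def write_draft(topic: str) -> str:
    return f"Essay on {topic}."

def critique(text: str) -> Optional[str]:
    """Return a suggestion, or None when the critic is satisfied."""
    return None if "example" in text else "add a concrete example"

def revise(text: str, feedback: str) -> str:
    return f"{text} (revised: {feedback})"

def agentic_write(topic: str, max_rounds: int = 3) -> tuple[str, int]:
    """Draft once, then alternate critique and revision until the critic is satisfied."""
    text = write_draft(topic)
    for rounds_used in range(max_rounds):
        feedback = critique(text)
        if feedback is None:
            return text, rounds_used
        text = revise(text, feedback)
    return text, max_rounds  # stop anyway so the loop cannot run forever

final_text, rounds = agentic_write("agents")
```

A zero-shot call corresponds to `write_draft` alone; everything inside the `for` loop is the agentic part.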

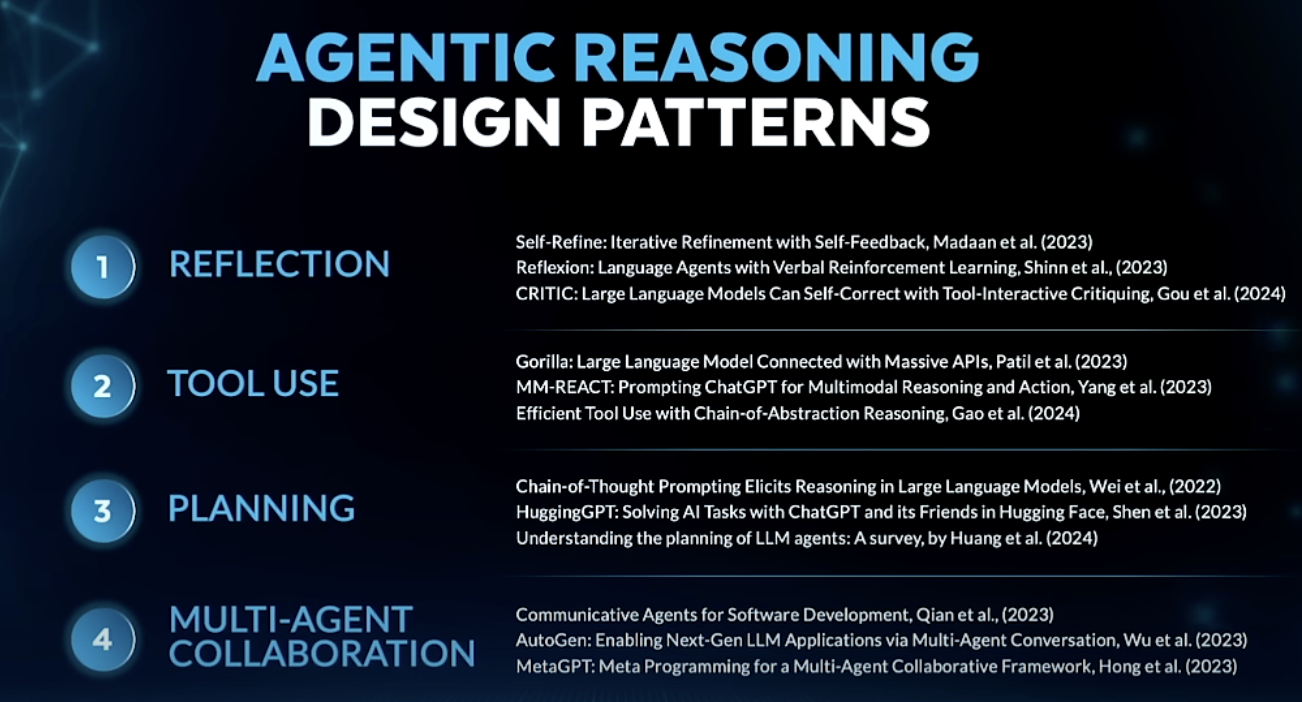

4 Agentic Reasoning Design Patterns

- Reflection: the model reviews and critiques its own output, then improves it

  - Self-Refine, Reflexion, CRITIC

- Tool Use: the model makes API calls (web search, code execution, external data)

  - Gorilla, MM-REACT, Efficient Tool Use with Chain-of-Abstraction

- Planning: the model decides on steps before acting; chain-of-thought drives task decomposition

  - Chain-of-Thought Prompting Elicits Reasoning, HuggingGPT, Talking to Tasks

- Multi-Agent Collaboration: multiple specialized agents communicate and divide work

  - Communicative Agents for Software Development, AutoGen, MetaGPT

LMM (Large Multimodal Model) Workflows

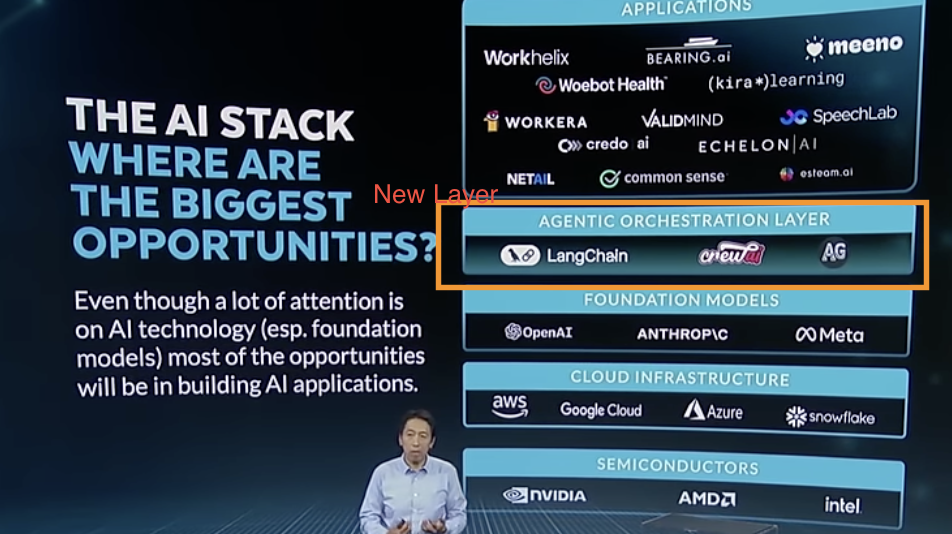

The AI Stack is gaining a new layer: an orchestration layer that coordinates across models, tools, and agents.

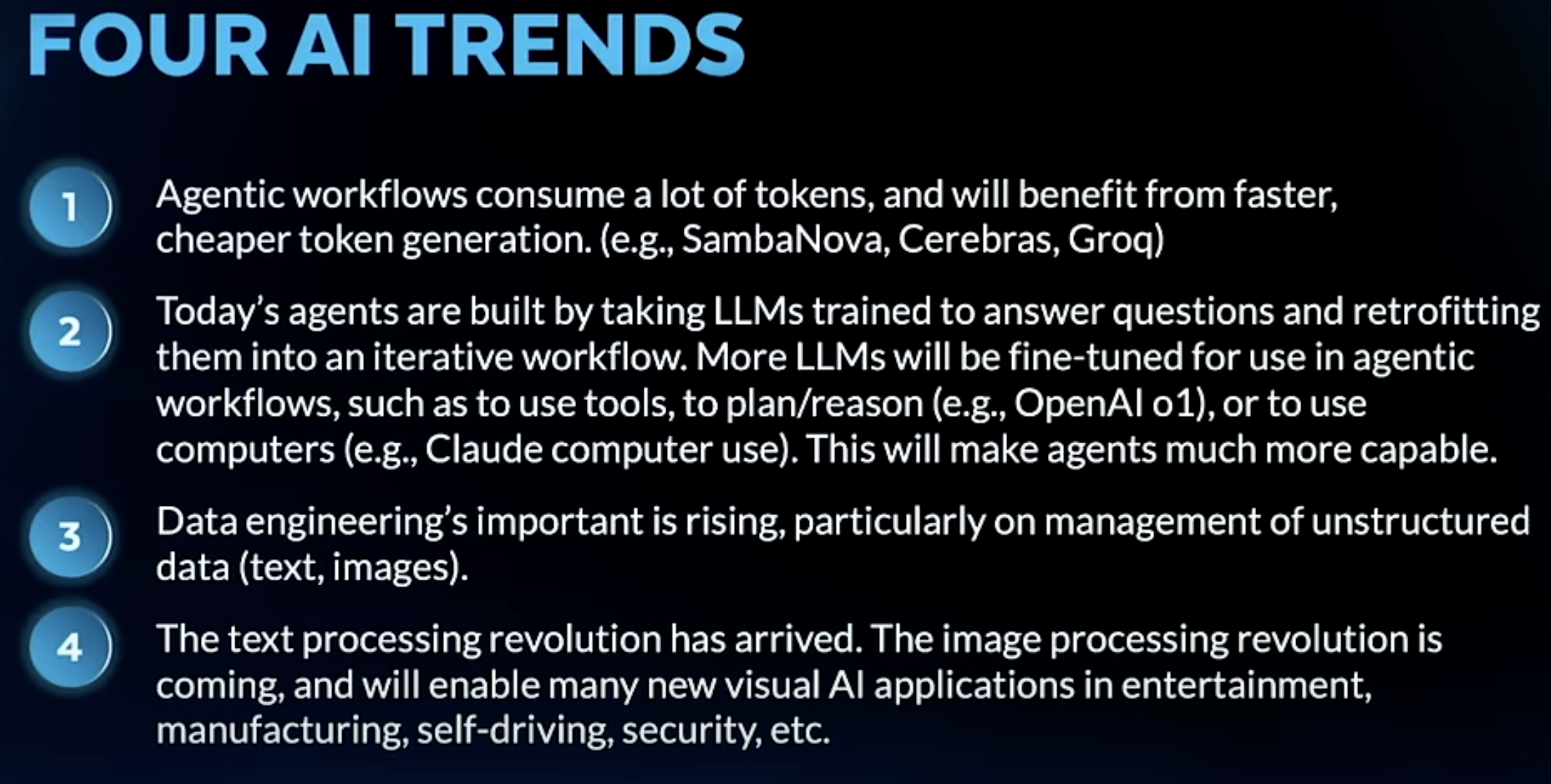

Four AI Trends to Watch

- Agentic workflows are token-hungry — will benefit from faster, cheaper token generation (SambaNova, Cerebras, Groq)

- Today’s agents = retrofitted LLMs — models trained to answer questions, then adapted into iterative workflows; future models will be fine-tuned natively for agentic use (tool use, planning, computer use)

- Data engineering is rising in importance — particularly unstructured data management (text, images)

- The text processing revolution is here; image processing is next — will unlock new visual AI applications in entertainment, manufacturing, self-driving, and security

What is a Coding Assistant — Anthropic Claude Code in Action

Notes from Anthropic’s Claude Code in Action course.

Source: Claude Code in Action — Anthropic SkillJar

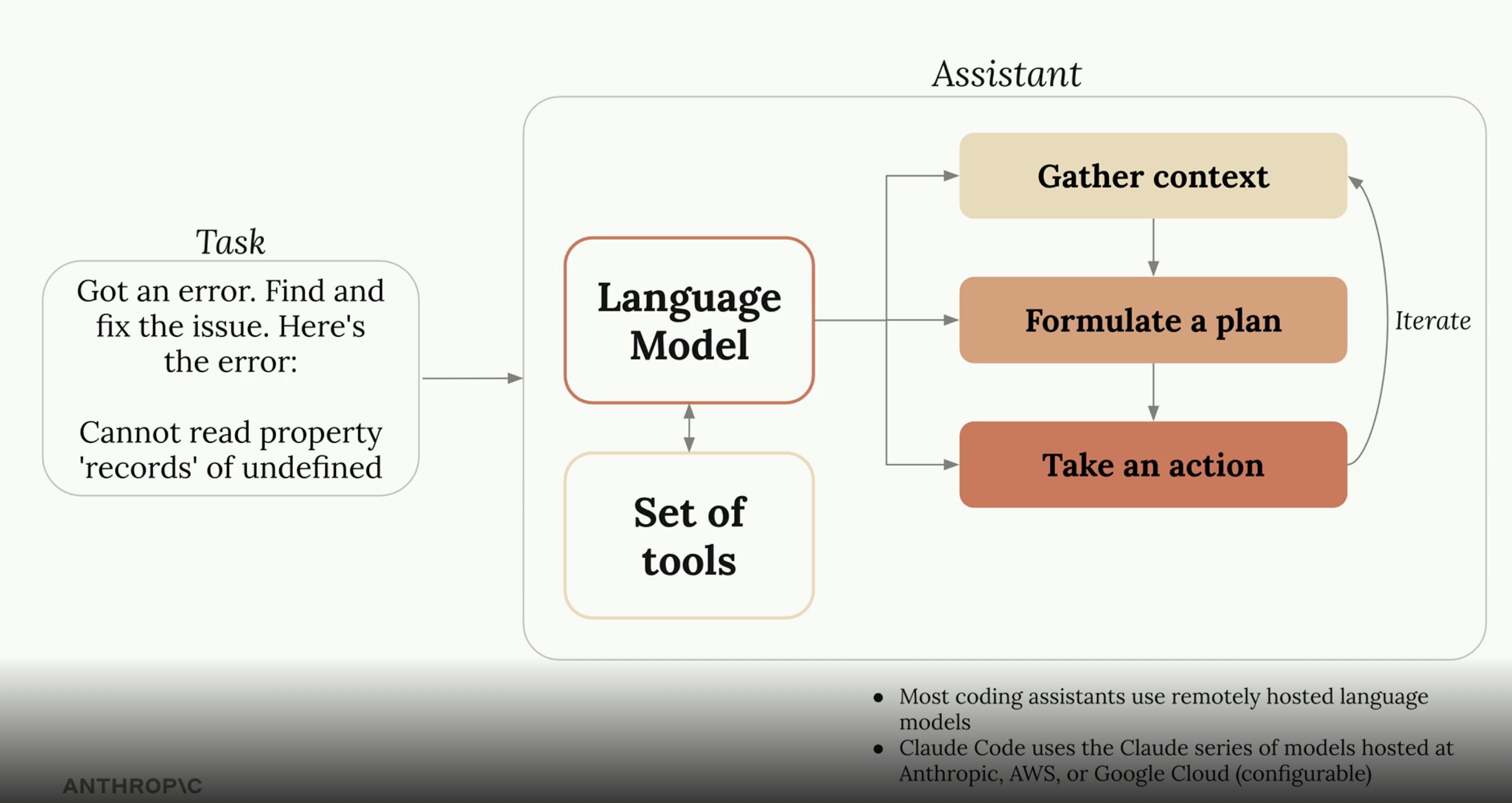

What is a Coding Assistant?

A coding assistant is a tool — it does whatever the model instructs it to do (e.g. read a file, run a command, edit code). The language model is the brain; the assistant is the hands.

The assistant loop:

- Gather context — read files, search code, understand the codebase

- Formulate a plan — decide what steps are needed

- Take an action — execute via tools, observe the result, iterate
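The three steps above can be sketched as a loop in which the model picks the next action and the assistant executes it. This is an illustration only, not how Claude Code is implemented: the in-memory `files` dict, the two tools, and the scripted `fake_model` are all hypothetical.

```python
# In-memory stand-in for a codebase, so the sketch touches no real files.
files = {"main.go": 'package main\n\nfunc main() { fmt.Println("hi") }\n'}

def read_file(path: str) -> str:
    return files[path]

def edit_file(path: str, old: str, new: str) -> str:
    files[path] = files[path].replace(old, new)
    return "edited"

TOOLS = {"read": read_file, "edit": edit_file}

# Hypothetical "model": a fixed script that reads first, then edits, then stops.
def fake_model(task: str, observations: list[str]):
    if not observations:
        return {"tool": "read", "args": ["main.go"]}               # gather context
    if len(observations) == 1:
        return {"tool": "edit", "args": ["main.go", "hi", "hello"]}  # act on the plan
    return None  # nothing left to do

def run_assistant(task: str, max_steps: int = 5) -> list[str]:
    observations: list[str] = []
    for _ in range(max_steps):
        action = fake_model(task, observations)
        if action is None:
            break
        result = TOOLS[action["tool"]](*action["args"])  # the assistant is the hands
        observations.append(result)                       # results feed the next decision
    return observations

observations = run_assistant("Make the greeting friendlier")
```

The `max_steps` cap is a design choice of this sketch to keep a misbehaving model from looping indefinitely.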

Most coding assistants use remotely hosted language models. Claude Code uses the Claude series of models hosted at Anthropic, AWS, or Google Cloud (configurable).

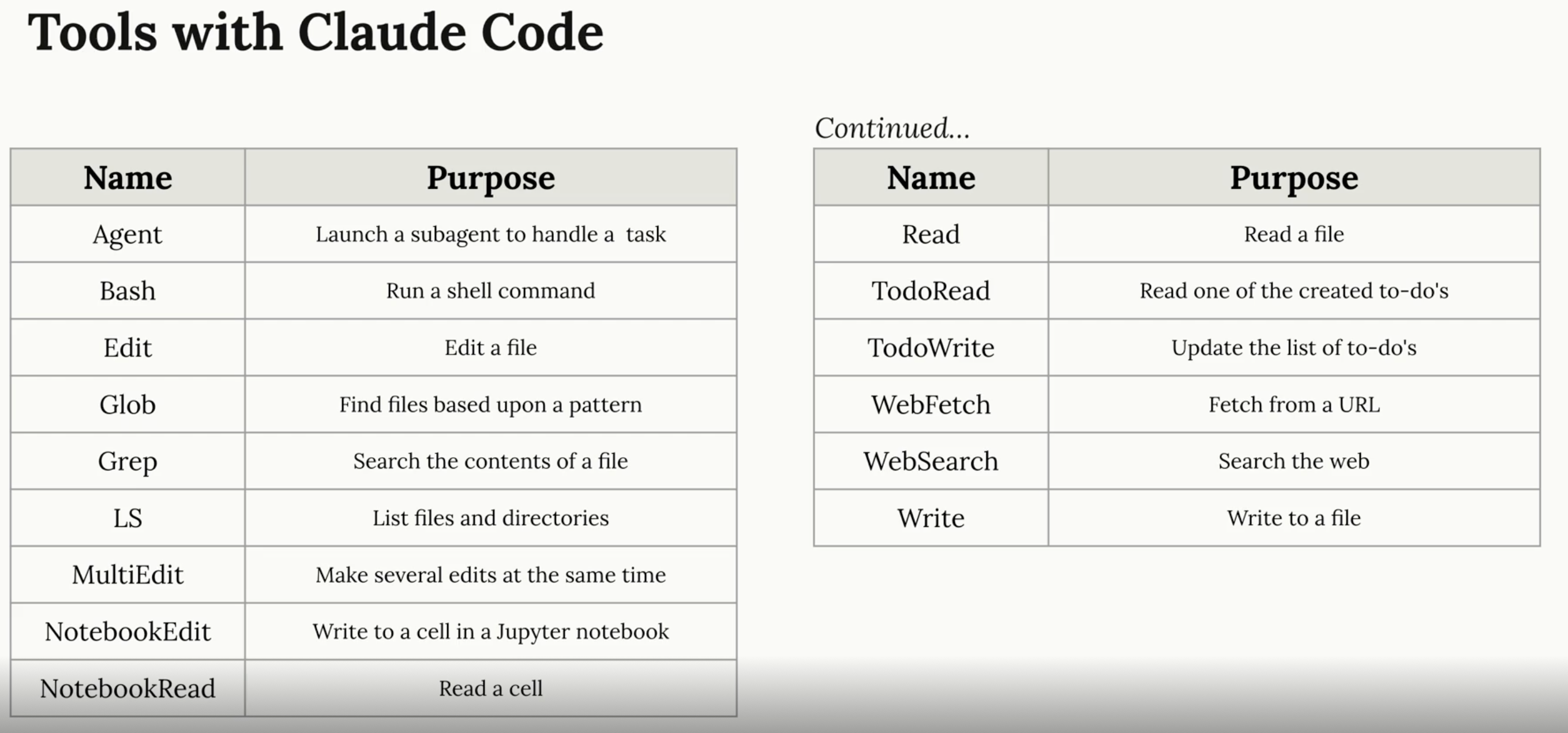

How Tools Work

Models are given plain text instructions describing what each tool does — e.g. ReadFile: main.go. The model then decides which tool to call and when. Claude’s models are particularly good at understanding tool descriptions and using them to complete tasks. The tool set is extensible — new tools can be added as needed.
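A small sketch of the idea: tool descriptions are rendered into plain text for the model, and the model's reply is parsed back into a tool name and an argument. The `ToolName: argument` reply format mirrors the `ReadFile: main.go` example above but is an assumption of this note; production APIs typically exchange structured tool definitions and calls instead. `ListFiles` and all function names are hypothetical.

```python
from typing import Optional

TOOL_DESCRIPTIONS = {
    "ReadFile": "Return the contents of the file at the given path.",
    "ListFiles": "List the file names in the project.",  # hypothetical extra tool
}

def build_system_prompt(descriptions: dict[str, str]) -> str:
    """Render the tool set as plain-text instructions for the model."""
    lines = ["You can call a tool by replying with 'ToolName: argument'. Available tools:"]
    lines += [f"- {name}: {text}" for name, text in descriptions.items()]
    return "\n".join(lines)

def parse_tool_call(reply: str) -> Optional[tuple[str, str]]:
    """Return (tool, argument) if the reply names a known tool, else None."""
    name, separator, argument = reply.partition(":")
    if not separator or name.strip() not in TOOL_DESCRIPTIONS:
        return None
    return name.strip(), argument.strip()

prompt = build_system_prompt(TOOL_DESCRIPTIONS)
```

Adding a tool is then a matter of adding one entry to the description table and one function to execute it, which is what makes the tool set extensible.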

Tools Available in Claude Code

| Tool | Purpose |
|---|---|
| Agent | Launch a subagent to handle a task |
| Bash | Run a shell command |
| Edit | Edit a file |
| Glob | Find files based on a pattern |
| Grep | Search the contents of a file |
| LS | List files and directories |
| MultiEdit | Make several edits at the same time |
| NotebookEdit | Write to a cell in a Jupyter notebook |
| NotebookRead | Read a cell |
| Read | Read a file |
| TodoRead | Read one of the created to-dos |
| TodoWrite | Update the list of to-dos |
| WebFetch | Fetch from a URL |
| WebSearch | Search the web |
| Write | Write to a file |

Andrej Karpathy: Software Is Changing (Again)

Notes from Andrej Karpathy’s talk on the three paradigms of software.

Source: Software Is Changing (Again) — Andrej Karpathy

Three Paradigms of Software

| Era | What you program | ~When |
|---|---|---|
| Software 1.0 | Explicit code: logic written by humans | ~1940s |
| Software 2.0 | Weights of a neural network | ~2019 |
| Software 3.0 | LLMs via prompts: natural language as code | ~2023 |

In Software 3.0, the program is the prompt. Writing instructions in plain English is now a legitimate way to program a computer.

How to Think About LLMs — The OS Analogy

The closest analogy for an LLM is an operating system:

- Closed source — GPT (Microsoft/OpenAI), Gemini (Google), Claude (Anthropic)

- Open source — LLaMA is the Linux equivalent

And just like with operating systems, it’s not only about the kernel (the model). The real story is the ecosystem — tools, modalities, integrations, and the platform that builds up around it.

Model Context Protocol (MCP)

Notes from the Large Language Model (LLM) Talk podcast — listened April 17, 2025.

The Problem

Powerful AI models exist, but connecting them to real-world data kept feeling like a brand-new integration project every single time. If you had M LLMs and N tools, you potentially needed M × N custom connectors — each built from scratch, every time.
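To make the arithmetic concrete: with point-to-point integrations every model needs its own connector to every tool, while with a shared protocol each side implements the protocol once. The M + N figure is the commonly cited framing and an assumption here, not something stated in the podcast notes; the function names are made up for this sketch.

```python
def connectors_point_to_point(num_models: int, num_tools: int) -> int:
    """Every model gets a custom connector to every tool: M x N."""
    return num_models * num_tools

def connectors_shared_protocol(num_models: int, num_tools: int) -> int:
    """Each model and each tool implements the shared protocol once: M + N."""
    return num_models + num_tools

# Example: 4 models and 10 tools.
without_protocol = connectors_point_to_point(4, 10)
with_protocol = connectors_shared_protocol(4, 10)
```

For 4 models and 10 tools that is 40 custom connectors versus 14 protocol implementations, and the gap widens as either number grows.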

What Is MCP?

Model Context Protocol is an open protocol introduced by Anthropic in November 2024. It creates a standardized way for applications to give LLMs context — a universal adapter so you never have to reinvent the wheel when connecting a new tool.

A few analogies:

- USB — one port standard, endless device support

- LSP (Language Server Protocol) — one protocol, any editor + any language

How It Works

At its core, MCP uses a client-server architecture with three key players:

| Player | What It Is |
|---|---|
| MCP Host | The AI app the user interacts with (e.g. Claude Desktop, a code editor plugin). Can connect to multiple MCP servers simultaneously. |
| MCP Client | Middleware that lives inside the Host. One client per server, which keeps connections isolated so one failure doesn’t cascade. |
| MCP Server | A lightweight program outside the Host that exposes specific services, data, or tools via the MCP protocol. Can reach local files or remote APIs. |
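A toy model of the three roles, showing why one client per server keeps a failure contained. There is no networking and no real MCP SDK here: `ToyServer`, `ToyClient`, `ToyHost`, and the two example servers are hypothetical names for illustration.

```python
from typing import Callable

class ToyServer:
    """Stands in for an MCP server: exposes one capability behind a name."""
    def __init__(self, name: str, handler: Callable[[str], str]) -> None:
        self.name = name
        self.handler = handler

class ToyClient:
    """One client per server, so a failure stays inside this connection."""
    def __init__(self, server: ToyServer) -> None:
        self.server = server

    def request(self, payload: str) -> dict:
        try:
            return {"server": self.server.name, "ok": True, "result": self.server.handler(payload)}
        except Exception as exc:  # isolate the failure instead of crashing the host
            return {"server": self.server.name, "ok": False, "error": str(exc)}

class ToyHost:
    """The user-facing app: holds one client for each connected server."""
    def __init__(self) -> None:
        self.clients: dict[str, ToyClient] = {}

    def connect(self, server: ToyServer) -> None:
        self.clients[server.name] = ToyClient(server)

    def ask_all(self, payload: str) -> list[dict]:
        return [client.request(payload) for client in self.clients.values()]

def broken_handler(payload: str) -> str:
    raise RuntimeError("disk offline")

host = ToyHost()
host.connect(ToyServer("files", broken_handler))
host.connect(ToyServer("weather", lambda payload: f"sunny in {payload}"))
results = host.ask_all("Oslo")
```

The failing `files` server produces an error entry, while the `weather` server still answers through its own client.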

Primitives — The Building Blocks

Client-Side Primitives

Roots

- Sets boundaries: which parts of the host system can the server access?

- The host tells the server: “You can only look in here” — prevents servers from wandering.
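The boundary idea behind roots can be illustrated with a path check. This is a simplification: in MCP, roots are communicated to the server as URIs and the exact enforcement is up to the implementation; `is_within_roots` and the example paths are hypothetical.

```python
from pathlib import Path

def is_within_roots(requested: str, roots: list[str]) -> bool:
    """Return True only if the requested path resolves to a location inside an allowed root."""
    target = Path(requested).resolve()  # resolve() also normalizes ".." segments
    for root in roots:
        root_path = Path(root).resolve()
        if target == root_path or root_path in target.parents:
            return True
    return False

allowed_roots = ["/srv/project"]
```

Resolving the path before comparing it is what stops a request such as `/srv/project/../secrets.txt` from slipping past a naive prefix check.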

Sampling

- Reverses the usual client/server dynamic: the server can ask the client to generate text.

- Useful because servers typically don’t have direct LLM access — but clients do.

- The client stays in full control: it picks the model, can rate-limit, or reject suspicious requests.
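A sketch of how a client might stay in control of sampling requests: it chooses the model, applies a rate limit, and rejects oversized prompts. The class, its parameters, the rejection reasons, and `fake_generate` are assumptions made for illustration and are not part of the MCP specification.

```python
import time
from typing import Optional

# Hypothetical stand-in for the client's own LLM call.
def fake_generate(model: str, prompt: str) -> str:
    return f"[{model}] completion for: {prompt}"

class SamplingGate:
    """Client-side gate for generation requests that originate from a server."""

    def __init__(self, model: str, max_requests: int, window_seconds: float,
                 max_prompt_chars: int = 2000) -> None:
        self.model = model                      # the client, not the server, picks the model
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.max_prompt_chars = max_prompt_chars
        self._accepted_at: list[float] = []

    def handle(self, prompt: str, now: Optional[float] = None) -> dict:
        now = time.monotonic() if now is None else now
        # Keep only the timestamps that still fall inside the rate-limit window.
        self._accepted_at = [t for t in self._accepted_at if now - t < self.window_seconds]
        if len(prompt) > self.max_prompt_chars:
            return {"status": "rejected", "reason": "prompt too long"}
        if len(self._accepted_at) >= self.max_requests:
            return {"status": "rejected", "reason": "rate limit exceeded"}
        self._accepted_at.append(now)
        return {"status": "ok", "model": self.model, "text": fake_generate(self.model, prompt)}

gate = SamplingGate("client-chosen-model", max_requests=2, window_seconds=60)
```

The `now` parameter exists only so the rate limit can be exercised without waiting; real callers would omit it.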

Server-Side Primitives

| Primitive | Controlled By | Purpose |
|---|---|---|
| Tools | Model | Executable functions the LLM can call, giving the model hands. Real-time data, DB writes, triggering processes. |
| Resources | Application (Host) | Information for the LLM to work with: documents, tables, structured data. |
| Prompts | User | Pre-built templates for common tasks. User selects when to apply them. |

Tools are for taking actions. Resources are for providing information.

Under the Hood

MCP uses JSON-RPC 2.0 for all client-server communication — a lightweight, well-understood RPC format.
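For a feel of the wire format, here is a sketch of a JSON-RPC 2.0 request and response built with the standard `json` module. The envelope fields (`jsonrpc`, `id`, `method`, `params`, `result`, `error`) and the `-32601` "Method not found" code come from JSON-RPC 2.0, and `tools/call` is an MCP method name. The `add` tool and the shape of its result payload are simplified assumptions, not the exact MCP result schema.

```python
import json
from typing import Any, Optional

def make_request(request_id: int, method: str, params: dict) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": method, "params": params})

def make_result(request_id: Optional[int], result: Any) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})

def make_error(request_id: Optional[int], code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": request_id,
                       "error": {"code": code, "message": message}})

def handle(raw: str) -> str:
    """Toy server: supports one hypothetical tool, 'add', invoked via tools/call."""
    message = json.loads(raw)
    if message.get("method") == "tools/call" and message["params"]["name"] == "add":
        arguments = message["params"]["arguments"]
        return make_result(message["id"], {"sum": arguments["a"] + arguments["b"]})
    return make_error(message.get("id"), -32601, "Method not found")

request = make_request(1, "tools/call", {"name": "add", "arguments": {"a": 2, "b": 3}})
response = json.loads(handle(request))
```

The `id` in the response matches the `id` in the request, which is how a client pairs replies with outstanding calls.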

Why It Matters

- Ecosystem — a growing library of ready-made MCP servers means your AI tools can do more without writing custom glue code for every tool.

- Portability — switch LLMs without rewriting your tools.

- Agent-ready — MCP gives agents a consistent way to discover and use tools, enabling more sophisticated multi-step reasoning across systems.

- Scalability — build AI systems that are more powerful, secure, and robust by composing MCP servers rather than hardcoding integrations.

Summary

“MCP is like a universal adapter for AI. It uses a client-server architecture with hosts, clients, and servers all working together seamlessly. It defines primitives like tools for action, resources for information, and prompts for structured interactions. On the client side, roots enforce security and sampling gives precise control over LLM text generation. All of this is designed to solve the dreaded M×N integration problem and create a more flexible, secure, and powerful AI ecosystem.”